Precision agriculture (PA) refers to using modern tools – GPS-guided machinery, soil sensors, drones, data analytics and even robots – to manage each part of a farm field in the most efficient way. Instead of treating an entire field uniformly, farmers can test soil and crop health in small zones and apply water, fertiliser or pesticides exactly where they are needed. This approach boosts yields and cuts waste: for example, on many farms precision techniques can cut fertiliser use by 15–20% while raising yields 5–20%. Smart sprayers using cameras can reduce herbicide use by up to 14%.

In the UK, precision farming also means meeting climate and nature goals while keeping farms profitable. However, adoption has been slower than hoped. Costs are high and many farmers lack the training or proof of value needed to invest. Now the government has unveiled a major package of incentives in 2026 – bigger farm support payments (SFI26) plus grants for equipment. The core question is: can these new incentives really shift farmer behaviour at scale? The evidence suggests yes, if they are well-targeted and combined with other support.

The timing is urgent. UK farms face rising costs for fuel, fertiliser and labour, and at the same time must cut greenhouse gases and protect wildlife. Precision tools can help on both fronts. A recent market study found the UK precision farming market was about $307 million in 2024 and is projected to grow to $710 million by 2033 at ~9.8% annual growth. This growth indicates strong interest in the technology.
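
As a quick arithmetic check on the market figures quoted above, the short Python sketch below derives the compound annual growth rate implied by the 2024 and 2033 values and projects the 2024 value forward at the quoted rate. The variable names are illustrative, and the calculation assumes simple annual compounding over nine years, which the market study itself may or may not have used.

```python
# Figures quoted in the text: about $307M in 2024, $710M projected for 2033.
start_value = 307.0   # USD millions, 2024
end_value = 710.0     # USD millions, 2033
years = 2033 - 2024   # nine compounding periods (assumption: annual compounding)

# Compound annual growth rate implied by the two endpoints.
implied_cagr = (end_value / start_value) ** (1 / years) - 1

# Forward projection from the 2024 value using the ~9.8% rate quoted in the text.
projected_2033 = start_value * (1 + 0.098) ** years

print(f"Implied CAGR: {implied_cagr:.1%}")
print(f"2033 value at 9.8% annual growth: ${projected_2033:.0f}M")
```

Under that assumption the endpoints and the quoted growth rate agree to within rounding.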

Yet on-farm take-up remains uneven. Large arable farms (especially in East Anglia) are already using GPS steering and soil sensors, but many smaller family farms still rely on paper plans rather than data-driven management. Industry surveys show around 45% of farmers cite unclear returns on investment and high upfront costs as key barriers. Only about one in five farmers has so far invested in agri-tech. Without help, switching every farm to precision methods could take a decade or more. That is why the new 2026 incentives – simplified subsidy schemes plus targeted grants – aim to tilt the economics and risk in farmers’ favour.

The Current State of Precision Agriculture in the UK

Precision farming use is growing but still far from universal. Adoption of specific technologies varies widely by farm type and region. For example, GPS automatic steering and field mapping are common on large arable holdings, but less so on small mixed or livestock farms. In a recent UK farm survey, farmers said they plan to increase their use of precision agriculture by 2026, but actual uptake lags. One report noted “around half of farmers surveyed cited high costs and uncertain returns as barriers”. Another found that only about 20% of farms had adopted any agri-tech, reflecting that many smaller farms cannot yet afford or integrate these tools.

Size matters. Larger farms (hundreds of hectares) are far more likely to have yield monitors, variable-rate spreaders, soil probes and drones. These farms already use data for decisions – one industry leader noted that 75% of large farms now use some data tools. By contrast, on smaller farms (under 50 ha) adoption is much lower, often below 20–30%. Regional differences appear too: highly mechanised areas like East Anglia and Lincolnshire see more precision use, whereas smaller mixed farms in Wales, Scotland or hilly regions more often stick to traditional methods.

The types of technology also vary. GPS auto-steer is one of the most common tools, but even that may be on only a quarter of tractors on small farms. Sensors (soil and weather stations) are still rare outside trials. Satellite or drone imagery is growing (many farmers now reference free NDVI maps), but active drone spraying or robotic weeding is still uncommon. In the UK, variable-rate fertiliser application and precision sprayers have been pioneered on some cereal farms, but penetration remains modest. Overall, most farmers are aware of precision options, but many are waiting for clear evidence or support to invest.

Barriers Limiting Adoption Without Strong Incentives

Several interlocking barriers have held UK farmers back from precision ag, especially smaller and medium-sized farms. The biggest hurdle is cost. New equipment like robot weeders, drones or advanced seed drills can cost tens of thousands of pounds. Many farms cannot make that investment without help – especially after years of low profits, floods or high energy prices. Surveys repeatedly find that a lack of affordable financing and unclear payback are among the top reasons farmers cite.

One UK agri-tech report noted nearly half of farmers said unclear return on investment was a key barrier. In practice, a new precision sprayer or variable-rate spreader must save enough in fertiliser or labour to cover its own cost, and on thin crop margins that is risky without a subsidy.
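
The payback logic described here can be sketched in a few lines of Python. This is a minimal illustration, not a full investment appraisal: the function name, the purchase price, the annual saving and the grant share are all hypothetical, and the calculation ignores finance costs, maintenance and resale value.

```python
def simple_payback_years(capital_cost, annual_saving, grant_share=0.0):
    """Years of input or labour savings needed to recover the farmer's share of the price.

    grant_share is the fraction of the purchase price covered by a grant (0.0 to 1.0).
    """
    if annual_saving <= 0:
        return float("inf")  # with no savings the machine never pays for itself
    net_cost = capital_cost * (1 - grant_share)
    return net_cost / annual_saving


# Hypothetical example: a £40,000 variable-rate spreader saving £5,000 a year.
without_grant = simple_payback_years(40_000, 5_000)
with_grant = simple_payback_years(40_000, 5_000, grant_share=0.5)

print(f"Payback without grant: {without_grant:.1f} years")
print(f"Payback with 50% grant: {with_grant:.1f} years")
```

With these made-up figures, a 50% grant halves the payback period from eight years to four, which is the kind of shift in risk a capital subsidy is meant to produce.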

Skills and knowledge gaps also slow adoption. Precision tools generate lots of digital data: mapping fields, analysing satellite images, or running smartphone apps. Many farmers (especially older ones) find this new digital farming approach daunting. Training and advice lag behind the technologies. There is no single “plug-and-play” solution: a farmer needs to know how to interpret yield maps or calibrate sensors. Studies of UK farmers find that lack of digital skills and support is a key reason to stick with tried-and-true methods.

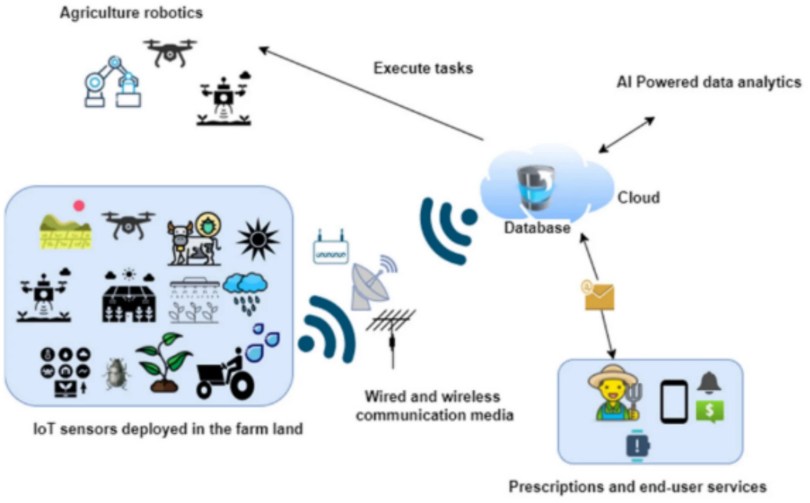

Connectivity issues make digital farming harder in the countryside. Good internet and mobile coverage is often needed for cloud-based agronomy apps and real-time data feeds. But rural connectivity is patchy. A 2025 NFU survey reported only 22% of farmers have reliable mobile signal across their whole farm, and about one in five farms still have less than 10 Mbps broadband. This means a drone or sensor that needs an online data link can be frustrating or impossible on many farms. Poor Wi-Fi or 4G signals leave some farmers unwilling to rely on apps or real-time weather data – a fundamental hurdle that farm incentives alone can’t fix.

Other issues include risk aversion and culture. Farming tends to value consistency. Trying a new system that can fail (say, robot weeding not working) can scare farmers who cannot afford a crop loss. There are also data trust and ownership concerns. Who owns the field data – the farmer, the equipment maker or an app provider? Without clear standards, some farmers worry about giving away their crop data or being locked into one company’s platform. This adds a layer of hesitation, since “getting on the wrong tractor” or software could lead to costly headaches.

Existing UK Incentives and Policy Framework

Historically, UK farm support was mainly through direct payments tied to land area (the old EU Basic Payment Scheme). Since Brexit, these are being phased out and replaced by more conditional schemes. The flagship is Environmental Land Management (ELM) payments run by DEFRA. ELM has multiple strands (Sustainable Farming Incentive, Countryside Stewardship, Landscape Recovery) rewarding farmers for environmental benefits. The idea is to pay farmers for outcomes like better soil health, cleaner water or more wildlife. Precision agriculture can help achieve those outcomes, but only if farmers adopt the tools – hence the interest in linking incentives.

Until 2024, the Sustainable Farming Incentive (SFI) had dozens of possible actions (cover crops, hedges, etc) that farmers could sign up for. Many of these actions generate data (like cover crop photos, soil tests). But the link to technology was indirect. Farmers might get paid per hectare for doing an action but had little extra support to invest in new machines. That meant SFI alone didn’t give a big boost to buying sensors or drones – it mainly encouraged land use changes.

There were some precision-friendly actions (e.g. measuring nutrient levels) but no direct equipment grants. Meanwhile, Defra has run small grant pilots (such as the Farming Innovation Programme) to test new tech on farms, but these stayed small in scale and uptake was limited.

Recent UK policy has explicitly recognized these gaps. In 2024-25 the government assembled a £345 million investment package for farming productivity and innovation. Within that, some ELM funding is earmarked for tech adoption. Key elements include:

1. A revamped Sustainable Farming Incentive (SFI26) to start mid-2026. This new scheme is much simpler: only 71 actions instead of 102, with a £100,000 per-farm cap to spread money more evenly. Crucially, SFI26 keeps three direct precision-farming actions with clear per-hectare payments. For example, it pays £27/ha for variable-rate nutrient application (applying fertiliser based on soil maps) and £43/ha for targeted spraying using camera or sensors.

The most generous is £150/ha for robotic mechanical weeding (removing weeds by machine rather than spraying). These payments effectively reward farmers each year for using precision methods. In addition, the SFI26 focus is on “doing and documenting” outcomes – meaning farmers using tech (drones, photos, sensors) can more easily prove their work and get paid.

2. Equipment grants. The Farming Equipment and Technology Fund (FETF) offers £50 million in capital grants (rounds in 2026) specifically for precision tools: GPS systems, robotic planters, drone sprayers, smart slurry mixers, etc. Farmers apply for a share of this to buy new machines.

3. ELM Capital Grants open in mid-2026 with £225 million for broader investments (water tanks, storage, low-emission equipment) that often complement precision tech. Together, these grants directly lower the upfront cost of precision gear, while SFI payments give a recurring income boost for using it.

4. Innovation and advisory support. A £70m Farming Innovation Programme is accelerating lab research into farm-ready tools. And Defra is offering new advice services and a free nutrient-management app to help farmers learn precision techniques. These non-cash incentives aim to build skills and create markets, making technology adoption less daunting.
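
To see how the per-hectare rates and the per-farm cap quoted above combine, here is a minimal Python sketch. The three rates and the £100,000 cap are the ones stated in the text; the function name, the action keys and the assumption that the cap simply limits the summed annual payment are illustrative and are not taken from scheme guidance.

```python
# Per-hectare rates for the three precision actions, as quoted in the text.
SFI26_RATES_GBP_PER_HA = {
    "variable_rate_nutrients": 27,
    "targeted_spraying": 43,
    "robotic_weeding": 150,
}
PER_FARM_CAP_GBP = 100_000  # per-farm cap quoted in the text


def annual_sfi_payment(hectares_by_action):
    """Sum rate x hectares over the chosen actions, then apply the per-farm cap.

    Assumption: the cap limits the total annual payment; the real scheme rules
    on stacking actions on the same land may differ.
    """
    uncapped = sum(
        SFI26_RATES_GBP_PER_HA[action] * hectares
        for action, hectares in hectares_by_action.items()
    )
    return min(uncapped, PER_FARM_CAP_GBP)


# Hypothetical farm: two actions on 200 ha each.
mid_sized = annual_sfi_payment(
    {"variable_rate_nutrients": 200, "targeted_spraying": 200}
)
# Hypothetical large farm: robotic weeding on 1,000 ha exceeds the cap.
large = annual_sfi_payment({"robotic_weeding": 1_000})

print(f"Mid-sized farm: £{mid_sized:,}")
print(f"Large farm (capped): £{large:,}")
```

In this sketch the mid-sized farm would receive £14,000 a year, while the large farm's £150,000 of uncapped payments is limited to £100,000.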

What “New Incentives” Could Look Like

New incentives can be both financial (grants, payments, tax breaks) and technical (data, training, networks). The recent policy moves already cover much ground, but ongoing debate suggests broadening support beyond single-year payments: moving toward rewarding actual environmental and efficiency outcomes, and building the digital backbone (connectivity, data systems, skills) that makes precision tools usable.

1. More targeted capital grants or loans. The FETF and ELM grants are a good start, but some farmers want even larger or longer-term financing. Proposals include tax incentives (e.g. accelerated depreciation on ag-tech purchases) or low-interest green loans for precision equipment. For instance, government could allow 100% first-year depreciation on ag-tech assets for tax purposes. This would lower the effective cost of machines for farms that pay tax on their profits.

2. Outcome-based payments linked to efficiency or sustainability targets. Instead of flat per-hectare rates, farmers could earn bonuses for measured gains. For example, a payment for reducing fertiliser use by X% while maintaining yield, or for cutting carbon emissions on the farm. A move toward these “results” payments would make precision tools more attractive, as the better the tech works, the more subsidy the farmer gets. In effect, this would be a pay-for-performance scheme requiring data logs (which only precision ag provides easily).

3. Data platforms and interoperability support. A common complaint is that different machines and software don’t talk to each other. The government or industry consortia could fund open data platforms or standards so that a drone map can feed any farm app, or results from one tool can integrate with another. Grants or vouchers for subscribing to farm-management software could also be offered. This lowers the “soft cost” of adoption by making it easier to use multiple technologies together.

4. Skills and training incentives. Training grants for farmers (like voucher-funded courses on digital farming) and subsidies for advisory services could be expanded. Some experts propose mobile “precision farms” or demo days where farmers earn credit for visiting. Putting graduate agronomists or engineers on farms (funded partly by government) would give on-the-ground help to test and learn new tech.

5. Collaborative or co-investment models. Encouraging farms to pool investments or lease equipment could spread costs. For example, a scheme where farmers share a drone service, or co-own a robot, with initial capital subsidized by grant. The UK’s Agri-EPI Centre already runs leasing trials. New incentives might explicitly support co-ops buying AI or robotics for groups of farms.
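
The outcome-based idea in point 2 above can be made concrete with a small Python sketch. Everything here is hypothetical: the function name, the bonus rate per percentage point of fertiliser reduction, and the 2% yield tolerance are invented for illustration and do not describe any existing or proposed UK scheme.

```python
def outcome_bonus(
    baseline_n_kg_ha,
    actual_n_kg_ha,
    baseline_yield_t_ha,
    actual_yield_t_ha,
    hectares,
    rate_per_pct_point=2.0,   # hypothetical: £ per ha per percentage point saved
    yield_tolerance=0.02,     # hypothetical: yield may fall by up to 2%
):
    """Pay a bonus for cutting fertiliser use, but only if yield is maintained."""
    # No bonus if yield dropped below the tolerated floor.
    if actual_yield_t_ha < baseline_yield_t_ha * (1 - yield_tolerance):
        return 0.0
    # Percentage reduction in nitrogen applied; increases earn nothing.
    reduction_pct = max(
        0.0, 100 * (baseline_n_kg_ha - actual_n_kg_ha) / baseline_n_kg_ha
    )
    return reduction_pct * rate_per_pct_point * hectares


# Hypothetical farm: nitrogen cut from 200 to 170 kg/ha on 100 ha, yield held.
bonus = outcome_bonus(200.0, 170.0, 8.0, 8.1, hectares=100)
# Same cut, but yield fell from 8.0 to 7.0 t/ha, so no bonus is paid.
no_bonus = outcome_bonus(200.0, 170.0, 8.0, 7.0, hectares=100)

print(f"Bonus with yield maintained: £{bonus:,.0f}")
print(f"Bonus with yield loss: £{no_bonus:,.0f}")
```

The design point is that the payment depends on logged before-and-after figures, so a farm needs field-level records of inputs and yields to claim it.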

Lessons from Other Countries and Sectors

Other nations’ experiences show how incentives can move the needle, and what pitfalls to avoid:

1. United States:

The US Farm Bill and conservation programs now explicitly cover precision farming. For example, recent US legislation added precision equipment and data analysis under the Environmental Quality Incentives Program (EQIP) and Conservation Stewardship Program (CSP), with cost-share rates up to 90% for technology adoption. In practice, American farmers can apply for huge rebates on precision seeders or variable-rate applicators, offsetting the high cost.

The US also funds ag-tech R&D aggressively, creating spin-outs that benefit farmers. These policies have boosted US tech adoption rates, especially on larger farms. However, even in the US, uptake on small farms is less than ideal unless incentives are well-targeted.

2. European Union:

The EU’s Common Agricultural Policy (CAP) now includes “eco-schemes” and innovation funds that reward precision farming in the context of sustainability goals. For example, French and German farmers can get CAP payments for precision watering or biodiversity monitoring using smart tools. EU initiatives also fund data sharing projects (like the European Agricultural Data Space) to make digital tools more accessible.

The lesson is that tying tech adoption to climate and biodiversity goals can justify public money to farmers, as seen in CAP’s “green architecture”. However, uniform EU rules also mean member states must ensure small farms aren’t left behind by big machines, a balance UK policy can emulate with its £100k cap.

3. Australia:

The Australian government and states have supported precision farming through research grants and tax incentives. Agencies like the Cooperative Research Centres (CRC) and Rural R&D Corporations have poured funds into agri-tech, benefiting tools tailored to Australian crops. Farmers can often get rebates for adopting water-saving precision irrigation or drones.

Even though Australia’s conditions differ (e.g. more arid land, larger farms), the key lesson is the combination of R&D funding and on-farm trials. Programs that help transition a prototype into a commercial product on real farms have accelerated adoption there.

Other sectors:

We can draw analogies to sectors like electric vehicles or renewable energy, where government incentives (grants, tax credits) drastically raised adoption. In the EV space, subsidies quickly pushed sales from niche to mainstream. A similar idea in farming is “get the first movers on board with generous support, then the rest follow”. Public-private partnerships have worked in fields like water-efficient irrigation, and could work for precision ag.

For instance, telecom companies sometimes team with governments to upgrade rural broadband; similarly, there could be joint schemes with private tech firms to deploy agri-tech. Across these examples, effective incentive design often means:

- High cost-share early on for new tech (like the US 90% cost-share) to overcome initial scepticism.

- Clear outcome metrics tied to payments (so farmers see exactly what they gain by adopting a given technology).

- Focus on smaller farmers and “late adopters” with dedicated windows or higher rates, to avoid widening the farm-size gap.

- Non-financial supports (extension services, interoperability standards) alongside the money.

Potential Impacts of Stronger Incentives

With well-designed incentives, the potential upside is large: more efficient, sustainable farming with a solid data backbone for the future. But this assumes the incentives are targeted carefully (to smaller farms and outcome metrics), and that supports like training keep pace. If not, the risk is that new incentives mainly boost the biggest operators and add admin burden to small farms with little gain. If new incentives do succeed in accelerating adoption, the impacts could be significant:

Productivity and profitability gains. Farmers who use precision tools often report better yields or lower input costs. For example, trials of variable-rate fertiliser and no-till in the UK have shown as much as 15% lower fertiliser use with stable or higher yields.

With new incentives, industry experts project an arable farm using cover crops, no-till and variable-rate nutrients could gain £45,000+ per year in SFI payments alone. Over time, these efficiency gains could boost overall farm margins. Smaller farms would especially benefit from the £100k cap ensuring they get a share of these gains.

Environmental benefits. Precision ag is often touted as “grow more with less”. Less wasted fertiliser and pesticide means lower nutrient runoff and water pollution. Early adopters in East Anglia using government-supported variable-rate spreading reported 15% less fertiliser use and healthier soils.

Robots instead of herbicides reduce chemical load in fields. By 2030, more precision farms could help the UK meet targets like cutting agricultural nitrogen pollution and methane. Additionally, detailed field data from sensors and drones can improve on-farm monitoring of wildlife habitats or soil carbon – something large food buyers are beginning to demand.

Better data for national goals. Incentivised precision farming will generate a wealth of geospatial data (soil maps, yield records, greenhouse gas estimates). This data can feed into national efforts on food security and climate reporting.

For example, if many farmers map their soil organic matter, the UK could have far better national estimates of soil carbon. And tracking pesticide use by field helps verify compliance with environmental regulations. In effect, precision adoption could turn farmers into precise “data providers” who help shape agricultural policy.

Structural effects – both positive and cautionary. On the one hand, stronger incentives may accelerate mechanisation and favour larger or well-financed farms that can handle complex tech. This could risk widening the gap between big and small farms unless carefully managed (hence the cap and small-farm window in SFI26). We might see a consolidation of farm management systems, with fewer farmers controlling larger precision-enabled farms.

On the other hand, better-funded smaller farms could survive in a tightening market. As agriculture becomes more data-driven, there is a chance that smaller farmers who leverage tech might actually compete better (through better yields or targeted niche markets).

Cultural shift and innovation spillover. If technology becomes the norm on farms, we may see younger or more tech-savvy people enter farming. The private agri-tech sector might also boom: equipment suppliers and software companies will have a bigger market. Lessons learned in the UK could spill overseas (British precision startups might export to other countries’ farms, for instance). Moreover, farmers who become accustomed to precision farming may be quicker to adopt other innovations (like digital livestock sensors or even genetic tools).

Role of the Private Sector and Supply Chains

Private investment and supply-chain programs can amplify government incentives. If retailers require data-backed farming practices, that creates a business incentive to adopt precision tools, often matching or exceeding public funds. Conversely, without private sector buy-in, even generous public grants may not reach every farmer (as seen in schemes where uptake was lower than expected).

The ideal scenario is a virtuous cycle: government incentives kick-start adoption, which makes the business case clearer, which then attracts more private financing and market demand for precision outputs. Government money is one piece of the puzzle – private industry and supply chains are the others. In practice, adoption will likely depend on a mix of public and private incentives:

1. Agri-tech companies and financiers. Companies that develop precision tools have a big stake. Many are offering creative financing: tractor manufacturers (John Deere, CLAAS, etc) now bundle GPS and telematics options into leases, making them more affordable. Agri-tech startups and equipment dealers may partner with banks or leasing firms to spread costs. In fact, an article from Angloscottish noted a surge in farmers using finance to buy new tech.

New incentives like grants can make it easier for these companies to demonstrate ROI to farmers, which in turn can boost sales. We may also see more co-investment models, where an equipment maker or retailer shares the cost or risk of deploying a new technology on a demo farm.

2. Food processors and retailers. The supply chain can strongly influence what happens on farms. Large buyers often set sourcing standards. For example, major UK retailers and processors increasingly demand proof of low carbon or low pesticide residues. Some are now explicitly rewarding sustainable practices – for instance, offering premiums to farms that show environmental monitoring data.

Marks & Spencer’s recent “Plan A for Farming” initiative is a case in point. M&S has committed £14m to sustainable farming and innovation, and is investing in a program where 50 British farmers receive free soil, biodiversity and carbon monitoring tools to meet retailer standards. By helping farmers afford sensors and data collection, M&S (and others) essentially act as co-funders of precision ag. Similarly, food processors might pay more for inputs from farms that can prove efficient water and chemical use.

3. Industry groups and partnerships. Bodies like the Agri-Tech Centre, Innovate UK and supply-chain alliances can help match farms with technology. Grant programmes (like Innovate UK’s Agri-Tech Catalyst) often require collaboration between farmers, tech firms and universities. These partnerships can reduce risk by pooling knowledge. Trade groups can also negotiate bulk discounts for members: for instance, a farmers’ co-op might organise a single purchase of a drone or weather station platform for all its members, with some subsidy.

4. Financial sector innovation. Agricultural banks and insurers have a role too. Insurance products might reward farms that use precision controls (lower risk, lower premiums). Banks and fintech firms could offer loans tied to grant eligibility (e.g. a loan forgiven if matched by a grant). We already see some fintech offerings for equipment leasing; new incentives might encourage more competition in that space.

Measuring Success: How to Know if Incentives Are Working

To judge whether new incentives truly accelerate precision farming, we need clear metrics. Ultimately, success means not just more equipment on farms, but verifiable environmental gains and improved farm finances. It will likely take several years of data (2026–2030) to see the full picture of impact. Ongoing monitoring and evaluation will be key, with a willingness to adjust incentives if certain goals aren’t being met. No single indicator is enough; policymakers and industry can gauge effectiveness by combining several. Possible measures include:

1. Adoption rates and usage: These could include the percentage of farms reporting use of specific technologies (e.g. % of fields managed with variable-rate equipment, % of farms using yield mapping or drones). Government surveys (like those done by Defra or industry bodies) should track these over time. But raw adoption counts can be misleading if farms only tick a box without real change. So it’s important to measure meaningful use – for example, not just owning a GPS system, but using it to cut input rates.

2. Farm productivity and cost metrics: Changes in average input usage per hectare, yields, profits or labour hours could indicate impact. If farmers on average need 20% less fertiliser per tonne of crop, that suggests precision tools are making a difference. These figures could be reported via annual statistics or pilot programme results. One could track, say, reductions in fertiliser bought per farm per year, or improvements in profit per hectare, though many other factors (weather, prices, crop mix) also influence these.

3. Environmental and sustainability indicators: Since one goal is greener farming, measuring things like nitrogen runoff, pesticide usage, soil organic carbon or greenhouse gas emissions on participating farms would show if precision tools help meet targets. For example, Defra might compare nitrate levels in water catchments where many farms adopt variable-rate spreading versus others.

4. Economic ROI and farmer satisfaction: Surveys of farmers in the schemes could assess whether the financial incentives outweigh costs. A key measure is whether farmers who adopted precision under incentive schemes actually renew their investments later. If a year after SFI26 some farms drop the tech (because it didn’t help enough), that would be a red flag. On the other hand, positive case studies (farmers saying “we saved X and cut our fertiliser bill”) help justify the incentives.

5. Equity of access: Another measure is who benefits. For example, statistics on how many small vs large farms applied for and received grants or actions would indicate if the cap and windows are working as intended. If small farms remain under-represented, that suggests tweaks are needed.

6. Administrative and training uptake: The success of support measures (like new training programmes or data platforms) can be tracked too. Metrics could include the number of farmers trained in digital skills, or the percentage of farms using Defra’s free nutrient-management app for planning variable-rate inputs.
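
Several of the measures listed above reduce to simple ratios over farm-level records. The Python sketch below shows one way they might be computed. The record fields, function names and sample data are all hypothetical; the 50 ha small-farm threshold follows the "under 50 ha" definition used earlier in this article, not an official one.

```python
from dataclasses import dataclass


@dataclass
class FarmRecord:
    """One farm's survey return (hypothetical fields for illustration)."""
    hectares: float
    uses_variable_rate: bool
    fertiliser_kg: float
    crop_tonnes: float
    received_grant: bool


def adoption_rate(records):
    """Share of farms reporting use of variable-rate equipment."""
    if not records:
        return 0.0
    return sum(r.uses_variable_rate for r in records) / len(records)


def fertiliser_per_tonne(records):
    """Total fertiliser applied divided by total crop output (kg per tonne)."""
    return sum(r.fertiliser_kg for r in records) / sum(r.crop_tonnes for r in records)


def small_farm_grant_share(records, small_threshold_ha=50):
    """Share of grant recipients that are small farms (equity-of-access check)."""
    grantees = [r for r in records if r.received_grant]
    if not grantees:
        return 0.0
    return sum(r.hectares < small_threshold_ha for r in grantees) / len(grantees)


# Made-up sample of four farms.
records = [
    FarmRecord(30, False, 6_000, 200, False),
    FarmRecord(45, True, 7_200, 300, True),
    FarmRecord(400, True, 60_000, 3_000, True),
    FarmRecord(250, True, 40_000, 1_900, False),
]

print(f"Adoption rate: {adoption_rate(records):.0%}")
print(f"Fertiliser per tonne: {fertiliser_per_tonne(records):.1f} kg/t")
print(f"Small-farm share of grants: {small_farm_grant_share(records):.0%}")
```

Tracking the same ratios year on year, rather than a single snapshot, is what would show whether the incentives are changing practice.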

Conclusion

The new 2026 incentives address the core adoption barriers and put precision tools at the heart of farming payments. Early indicators are positive: many farms are enrolling in SFI26 and asking for tech grants, showing that the system is steering behaviour. If these policies remain stable and adaptable, and if follow-through supports the digital transition, we can expect a step-change in how UK farming operates. Widespread precision agriculture adoption may not happen overnight, but the trajectory is set. With the right mix of incentives, collaboration and oversight, the answer to whether incentives can accelerate adoption appears to be yes – especially when paired with continued private and industry support.