Highland barley, a resilient cereal crop grown in the high-altitude regions of China’s Qinghai-Tibet Plateau, plays a critical role in local food security and economic stability. Known scientifically as Hordeum vulgare L., this crop thrives in extreme conditions—thin air, low oxygen levels, and an average annual temperature of 6.3°C—making it indispensable for communities in harsh environments.

With over 270,000 hectares dedicated to its cultivation in China, primarily in the Xizang Autonomous Region, highland barley accounts for more than half of the region’s planted area and over 70% of its total grain production. Accurate monitoring of barley density—the number of plants or spikes per unit area—is essential for optimizing agricultural practices, such as irrigation and fertilization, and predicting yields.

However, traditional methods like manual sampling or satellite imaging have proven inefficient, labor-intensive, or insufficiently detailed. To address these challenges, researchers from Fujian Agriculture and Forestry University and Chengdu University of Technology developed an innovative AI model based on YOLOv5, a cutting-edge object-detection algorithm.

Their work, published in Plant Methods (2025), reported a mean average precision (mAP, a metric summarizing overall detection accuracy) of 93.1% while cutting the computational cost (FLOPs) to about 75.6% of the baseline model’s, which makes the model a candidate for real-time drone deployment.

Challenges and Innovations in Crop Monitoring

The importance of highland barley extends beyond its role as a food source. In 2022 alone, Rikaze City, a major barley-producing region, harvested 408,900 tons of barley across 60,000 hectares, contributing nearly half of Tibet’s total grain output.

Despite its cultural and economic significance, estimating barley yields has long been challenging. Traditional methods, such as manual counting or satellite imagery, are either too labor-intensive or lack the resolution needed to detect individual barley spikes, the grain-bearing parts of the plant, which are often just 2–3 centimeters wide.

Manual sampling requires farmers to physically inspect sections of a field—a process that is slow, subjective, and impractical for large-scale farms. Satellite imagery, while useful for broad observations, struggles with low resolution (often 10–30 meters per pixel) and frequent weather disruptions, such as cloud cover in mountainous regions like Tibet.

To overcome these limitations, researchers turned to unmanned aerial vehicles (UAVs), or drones, equipped with 20-megapixel cameras. These drones captured 501 high-resolution images of barley fields in Rikaze City during two critical growth stages: the growth stage in August 2022, characterized by green, developing spikes, and the maturation stage in August 2023, marked by golden-yellow, harvest-ready spikes.

However, analyzing these images posed challenges, including blurred edges caused by drone motion, the small size of barley spikes in aerial views, and overlapping spikes in densely planted fields.

To address these issues, researchers preprocessed the images by splitting each high-resolution image into 35 smaller sub-images and filtering out the blurry edges. Together with the labeling and augmentation steps described under Implementation and Results, this produced the 2,970 sub-images used for training. The preprocessing step ensured the model focused on clear, usable data rather than low-quality regions.
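The tiling step can be sketched as follows. This is a minimal illustration, not the authors' code: the 7 × 5 grid, the 5472 × 3648 frame size, and the rule of dropping the outer ring of tiles are all assumptions chosen only to match the "35 sub-images" and "blurry edges" described above.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def tile_grid(width: int, height: int, cols: int, rows: int) -> List[Box]:
    """Split a width x height image into cols x rows non-overlapping tiles."""
    # Integer edges, so the tiles cover the image exactly even when the
    # dimensions are not divisible by the grid size.
    xs = [width * c // cols for c in range(cols + 1)]
    ys = [height * r // rows for r in range(rows + 1)]
    return [
        (xs[c], ys[r], xs[c + 1], ys[r + 1])
        for r in range(rows)
        for c in range(cols)
    ]


def is_border_tile(index: int, cols: int, rows: int) -> bool:
    """True for tiles on the outer ring of the grid (assumed blurry here)."""
    r, c = divmod(index, cols)
    return r in (0, rows - 1) or c in (0, cols - 1)


# Hypothetical 20-megapixel frame cut into an assumed 7 x 5 grid.
tiles = tile_grid(5472, 3648, cols=7, rows=5)
interior = [t for i, t in enumerate(tiles) if not is_border_tile(i, 7, 5)]
```

In practice the decision about which tiles to keep would depend on where the blur actually appears in each frame; the fixed border rule is only a stand-in.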

Technical Advancements in Object Detection

Central to this research is the YOLOv5 algorithm (You Only Look Once version 5), a one-stage object-detection model known for its speed and modular design. Unlike older two-stage models like Faster R-CNN, which first identify regions of interest and then classify objects, YOLOv5 performs detection in a single pass, making it significantly faster.

The baseline YOLOv5n model, with 1.76 million parameters (configurable components of the AI model) and 4.1 billion FLOPs (floating-point operations, a measure of computational complexity), was already efficient. However, detecting tiny, overlapping barley spikes required further optimization.

The research team introduced three key enhancements to the model: depthwise separable convolution (DSConv), ghost convolution (GhostConv), and a convolutional block attention module (CBAM).

Depthwise separable convolution (DSConv) reduces computational costs by splitting the standard convolution process (a mathematical operation that extracts features from images) into two steps. First, depthwise convolution applies a separate filter to each input channel (for a raw image, the red, green, and blue channels), analyzing each channel on its own.

This is followed by pointwise convolution, which combines results across channels using 1×1 kernels. For a 3×3 kernel, the split shrinks the parameter count to roughly one ninth of the original plus a small term that depends on the number of output channels, a saving of close to 90% in wide layers.

For example, a traditional 3×3 convolution with 64 input and 128 output channels requires 73,728 parameters, while DSConv reduces this to just 8,768—an 88% reduction. This efficiency is critical for deploying models on drones or mobile devices with limited processing power.
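The arithmetic behind these figures can be reproduced in a few lines. The sketch below counts weights only (bias terms are ignored, which is an assumption), and the function names are illustrative.

```python
def conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a standard k x k convolution (bias terms ignored)."""
    return k * k * c_in * c_out


def dsconv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a depthwise k x k step plus a 1 x 1 pointwise step."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 kernels that mix the channels
    return depthwise + pointwise


standard = conv_params(64, 128, 3)
separable = dsconv_params(64, 128, 3)
saving = 1 - separable / standard
print(standard, separable, f"{saving:.1%}")
```

For 64 input and 128 output channels this prints `73728 8768 88.1%`. The ratio of the two counts equals 1/c_out + 1/k², which is why the saving approaches 8/9 for a 3×3 kernel as layers get wider.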

Ghost convolution (GhostConv) further lightens the model by generating additional feature maps (simplified representations of image patterns) through cheap linear operations applied to feature maps that already exist, instead of resource-heavy convolutions. In the original GhostNet design these cheap operations are depthwise convolutions.

Traditional convolution layers produce redundant features, wasting computational resources. GhostConv addresses this by creating “ghost” features from existing ones, effectively halving the parameters in certain layers.

For instance, a layer with 64 input and 128 output channels would traditionally require 73,728 parameters, but GhostConv reduces this to 36,864 while maintaining accuracy. This technique is especially useful for detecting small objects like barley spikes, where computational efficiency is paramount.
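A rough count makes the halving concrete. The sketch below assumes that half of the output channels come from an ordinary 3×3 convolution and the other half from a 3×3 depthwise "cheap" operation; the kernel sizes, the ratio of 2, and the function names are assumptions, and real GhostConv implementations differ in these details.

```python
from typing import Tuple


def conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a standard k x k convolution (bias terms ignored)."""
    return k * k * c_in * c_out


def ghost_params(c_in: int, c_out: int, k: int,
                 cheap_k: int = 3, ratio: int = 2) -> Tuple[int, int]:
    """Return (primary, cheap) weight counts for a ghost-style layer."""
    intrinsic = c_out // ratio                 # channels computed normally
    primary = conv_params(c_in, intrinsic, k)  # ordinary convolution
    # Each intrinsic channel spawns (ratio - 1) ghost channels through
    # one cheap_k x cheap_k depthwise filter apiece.
    cheap = cheap_k * cheap_k * intrinsic * (ratio - 1)
    return primary, cheap


primary, cheap = ghost_params(64, 128, 3)
print(primary, cheap, primary + cheap)
```

This prints `36864 576 37440`: the primary convolution accounts for the 36,864 quoted above, and under these assumptions the cheap operation adds only a few hundred weights on top.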

The convolutional block attention module (CBAM) was integrated to help the model focus on critical features, even in cluttered environments. Attention mechanisms, inspired by human visual systems, allow AI models to prioritize important parts of an image.

CBAM employs two types of attention: channel attention, which weights the feature channels that matter most (for example, those responding to the green of growing spikes), and spatial attention, which highlights key regions within an image (e.g., clusters of spikes). By replacing standard modules with DSConv and GhostConv and incorporating CBAM, the researchers created a leaner, more precise model tailored for barley detection.
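To make the channel-attention half of CBAM more tangible, here is a small pure-Python sketch. It follows the usual formulation (average and max pooling per channel, a shared two-layer perceptron, a sigmoid), but the weights are hand-picked rather than trained, the helper names are hypothetical, and the spatial-attention half is left out for brevity.

```python
import math
from typing import List

Channel = List[List[float]]  # one feature channel as H x W values
Matrix = List[List[float]]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def shared_mlp(vec: List[float], w1: Matrix, w2: Matrix) -> List[float]:
    """Two-layer perceptron with ReLU, reused for both pooled descriptors."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, vec))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]


def channel_attention(features: List[Channel], w1: Matrix, w2: Matrix) -> List[float]:
    """Return one attention weight in (0, 1) per channel."""
    avg = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in features]
    peak = [max(map(max, ch)) for ch in features]
    return [
        sigmoid(a + m)
        for a, m in zip(shared_mlp(avg, w1, w2), shared_mlp(peak, w1, w2))
    ]


def apply_weights(features: List[Channel], weights: List[float]) -> List[Channel]:
    """Scale every value in a channel by that channel's attention weight."""
    return [[[v * wt for v in row] for row in ch]
            for ch, wt in zip(features, weights)]


# Toy input: channel 0 is active everywhere, channel 1 is silent.
features = [[[1.0, 1.0], [1.0, 1.0]], [[0.0, 0.0], [0.0, 0.0]]]
w1 = [[1.0, -1.0]]     # hand-picked weights: 2 channels -> 1 hidden unit
w2 = [[1.0], [-1.0]]   # hand-picked weights: 1 hidden unit -> 2 channels
weights = channel_attention(features, w1, w2)
reweighted = apply_weights(features, weights)
```

With these toy weights the active channel receives a weight above 0.5 and the silent one a weight below 0.5, which is the behaviour the module is meant to learn from data.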

Implementation and Results

To train the model, researchers manually labeled 135 original images using bounding boxes—rectangular frames marking the location of barley spikes—categorizing spikes into growth and maturation stages. Data augmentation techniques—including rotation, noise injection, occlusion, and sharpening—expanded the dataset to 2,970 images, improving the model’s ability to generalize across diverse field conditions.

For example, rotating images by 90°, 180°, or 270° helped the model recognize spikes from different angles, while adding noise simulated real-world imperfections like dust or shadows. The dataset was split into a training set (80%) and a validation set (20%), ensuring robust evaluation.
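When an image is rotated, its bounding boxes have to be rotated with it. The sketch below shows one way to do that for quarter turns; the corner-based box format and the function names are assumptions, since the article does not describe the annotation format used.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def rotate_box_90_cw(box: Box, width: float, height: float) -> Tuple[Box, float, float]:
    """Rotate a box together with its image by 90 degrees clockwise.

    Returns the new box plus the new image width and height.
    """
    x_min, y_min, x_max, y_max = box
    # A point (x, y) moves to (height - y, x); the image becomes height x width.
    new_box = (height - y_max, x_min, height - y_min, x_max)
    return new_box, height, width


def rotate_box(box: Box, width: float, height: float,
               quarter_turns: int) -> Tuple[Box, float, float]:
    """Apply 0, 1, 2, or 3 clockwise quarter turns (90, 180, 270 degrees)."""
    for _ in range(quarter_turns % 4):
        box, width, height = rotate_box_90_cw(box, width, height)
    return box, width, height


# A made-up box in a 100 x 80 image, rotated by 90 and 180 degrees.
quarter = rotate_box((10, 20, 30, 60), 100, 80, 1)
half = rotate_box((10, 20, 30, 60), 100, 80, 2)
```

Four quarter turns return the original box, which is a convenient sanity check for any augmentation pipeline that transforms labels alongside pixels.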

Training took place on a high-performance system with an AMD Ryzen 7 CPU, NVIDIA RTX 4060 GPU, and 64GB RAM, using the PyTorch framework, a popular tool for deep learning. Over 300 training epochs (complete passes through the dataset), the model’s precision (the share of detections that are correct), recall (the share of actual spikes that are detected), and loss (the training objective the optimizer minimizes) were tracked.
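Precision and recall for a detector are usually computed by matching predicted boxes to labeled boxes. The following is a simplified sketch of that idea, assuming a greedy one-to-one match at an IoU threshold of 0.5 and predictions already sorted by confidence; the study's exact evaluation protocol is not described here, so treat the details as assumptions.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(preds: List[Box], truths: List[Box],
                     threshold: float = 0.5) -> Tuple[float, float]:
    """Greedy matching: each labeled box can validate at most one prediction."""
    matched = set()
    true_pos = 0
    for p in preds:
        best, best_iou = None, threshold
        for i, t in enumerate(truths):
            score = iou(p, t)
            if i not in matched and score >= best_iou:
                best, best_iou = i, score
        if best is not None:
            matched.add(best)
            true_pos += 1
    precision = true_pos / len(preds) if preds else 0.0
    recall = true_pos / len(truths) if truths else 0.0
    return precision, recall


# Made-up example: three labeled spikes, three predictions, one of them wrong.
truths = [(0, 0, 10, 10), (20, 20, 30, 30), (40, 40, 50, 50)]
preds = [(1, 1, 10, 10), (20, 20, 29, 30), (60, 60, 70, 70)]
precision, recall = precision_recall(preds, truths)
```

Here two of the three predictions match a labeled spike and two of the three labeled spikes are found, so both precision and recall come out at two thirds.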

The results were striking. The improved YOLOv5 model achieved a precision of 92.2% (up from 89.1% in the baseline) and a recall of 86.2% (up from 83.1%), a gain of 3.1 percentage points over the baseline YOLOv5n on both metrics. Its mean average precision (mAP), a comprehensive metric averaging detection accuracy across all categories, reached 93.1%, with individual scores of 92.7% for growth-stage spikes and 93.5% for maturation-stage spikes.

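Equally notable was its computational efficiency: the model’s parameter count fell to 1.2 million and its FLOPs to 3.1 billion. Set against the 4.1 billion FLOPs of the baseline, the new figure is 75.6% of the original (a cut of about a quarter), and the parameter count likewise lands at roughly 70% of the baseline’s. Comparative analyses with leading models like Faster R-CNN and YOLOv8n highlighted this favorable trade-off.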

While YOLOv8n achieved a slightly higher mAP (93.8%), its parameters (3.0 million) and FLOPs (8.1 billion) were roughly 2.5 and 2.6 times those of the proposed model, respectively, making the proposed model far more efficient for real-time applications.
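The size comparisons can be cross-checked with a few divisions. The snippet below uses only the parameter and FLOP figures quoted in this article; the dictionary layout and helper name are illustrative.

```python
from typing import Dict, Tuple

# (parameters in millions, FLOPs in billions), as quoted in the article.
models: Dict[str, Tuple[float, float]] = {
    "YOLOv5n baseline": (1.76, 4.1),
    "improved YOLOv5": (1.2, 3.1),
    "YOLOv8n": (3.0, 8.1),
}


def relative(model: str, reference: str) -> Tuple[float, float]:
    """Return (parameter ratio, FLOP ratio) of model versus reference."""
    (params, flops), (ref_params, ref_flops) = models[model], models[reference]
    return params / ref_params, flops / ref_flops


vs_baseline = relative("improved YOLOv5", "YOLOv5n baseline")
vs_proposed = relative("YOLOv8n", "improved YOLOv5")
print([round(r, 3) for r in vs_baseline], [round(r, 3) for r in vs_proposed])
```

The FLOP ratio against the baseline comes out at about 0.756, meaning the improved model needs roughly three quarters of the baseline's operations, while YOLOv8n needs about 2.5 times the parameters and 2.6 times the FLOPs of the improved model.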

Visual comparisons underscored these advancements. In growth-stage images, the improved model detected 41 spikes compared to the baseline’s 28. During maturation, it identified 3 spikes versus the baseline’s 2, with fewer missed detections (marked by orange arrows) and false positives (marked by purple arrows).

These improvements are vital for farmers relying on accurate data to predict yields and optimize resources. For instance, precise spike counts enable better estimates of grain production, informing decisions about harvest timing, storage, and market planning.

Future Directions and Practical Implications

Despite its success, the study acknowledged limitations. Performance dipped under extreme lighting conditions, such as harsh midday glare or heavy shadows, which can obscure spike details. Additionally, rectangular bounding boxes sometimes failed to fit irregularly shaped spikes, introducing minor inaccuracies.

The model also excluded blurry edges from UAV images, requiring manual preprocessing—a step that adds time and complexity.

Future work aims to address these issues by expanding the dataset to include images captured at dawn, noon, and dusk, experimenting with polygon-shaped annotations (flexible shapes that better fit irregular objects), and developing algorithms to better handle blurry regions without manual intervention.
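One common way to flag blurry regions automatically is the variance of the Laplacian: sharp images have strong local intensity changes, blurred ones do not. The sketch below illustrates that general heuristic on a grayscale image stored as nested lists. It is not the method proposed in the study, and the threshold is a free parameter that would need tuning on real field images.

```python
from typing import List

Gray = List[List[float]]  # grayscale image as rows of intensity values


def laplacian_variance(img: Gray) -> float:
    """Variance of a 4-neighbour Laplacian over the interior pixels."""
    h, w = len(img), len(img[0])
    values = [
        img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1]
        - 4 * img[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def is_blurry(img: Gray, threshold: float) -> bool:
    """Flag an image whose Laplacian variance falls below the threshold."""
    return laplacian_variance(img) < threshold


# Toy inputs: a featureless patch and a high-contrast checkerboard.
flat = [[0.5] * 5 for _ in range(5)]
checker = [[float((x + y) % 2) for x in range(5)] for y in range(5)]
```

A featureless patch scores zero while the checkerboard scores high, so a threshold between the two separates them; applied per tile, the same test could replace a manual pass over the sub-images.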

The implications of this research are profound. For farmers in regions like Tibet, the model offers real-time yield estimation, replacing labor-intensive manual counts with drone-based automation. Distinguishing between growth stages enables precise harvest planning, reducing losses from premature or delayed harvesting.

Detailed data on spike density—such as identifying underpopulated or overcrowded areas—can inform irrigation and fertilization strategies, reducing water and chemical waste. Beyond barley, the lightweight architecture holds promise for other crops, such as wheat, rice, or fruits, paving the way for broader applications in precision agriculture.
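Turning detections into a density map is mostly bookkeeping. The sketch below converts per-tile spike counts into spikes per square meter and labels each tile; the tile ground area, the thresholds, and the example counts are all made-up values for illustration and would have to come from flight altitude, camera geometry, and agronomic guidance in practice.

```python
from typing import List


def spike_density(count: int, ground_area_m2: float) -> float:
    """Spikes per square meter for one tile."""
    if ground_area_m2 <= 0:
        raise ValueError("ground area must be positive")
    return count / ground_area_m2


def classify_tiles(counts: List[int], tile_area_m2: float,
                   low: float, high: float) -> List[str]:
    """Label each tile as sparse, normal, or dense by its spike density."""
    labels = []
    for count in counts:
        density = spike_density(count, tile_area_m2)
        if density < low:
            labels.append("sparse")
        elif density > high:
            labels.append("dense")
        else:
            labels.append("normal")
    return labels


# Hypothetical counts for three tiles that each cover 0.25 square meters.
labels = classify_tiles([12, 30, 55], tile_area_m2=0.25, low=100, high=200)
print(labels)
```

With these illustrative numbers the three tiles come out as sparse, normal, and dense, which is the kind of per-zone signal an irrigation or fertilization plan could act on.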

Conclusion

In conclusion, this study exemplifies the transformative potential of AI in addressing agricultural challenges. By refining YOLOv5 with innovative lightweight techniques, the researchers have created a tool that balances accuracy and efficiency—critical for real-world deployment in resource-constrained environments.

Terms like mAP, FLOPs, and attention mechanisms may seem technical, but their impact is deeply practical: they enable farmers to make data-driven decisions, conserve resources, and maximize yields. As climate change and population growth intensify pressure on global food systems, such advancements will be indispensable.

For the farmers of Tibet and beyond, this technology represents not just a leap in agricultural efficiency, but a beacon of hope for sustainable food security in an uncertain future.

Reference: Cai, M., Deng, H., Cai, J. et al. Lightweight highland barley detection based on improved YOLOv5. Plant Methods 21, 42 (2025). https://doi.org/10.1186/s13007-025-01353-0

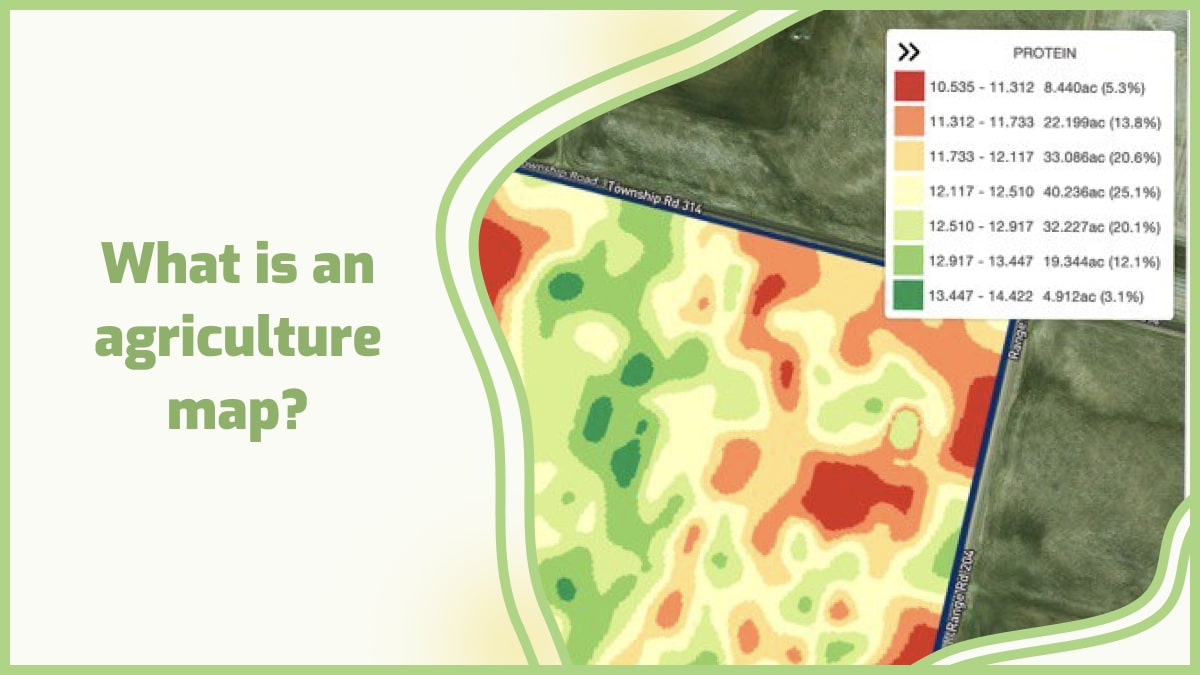

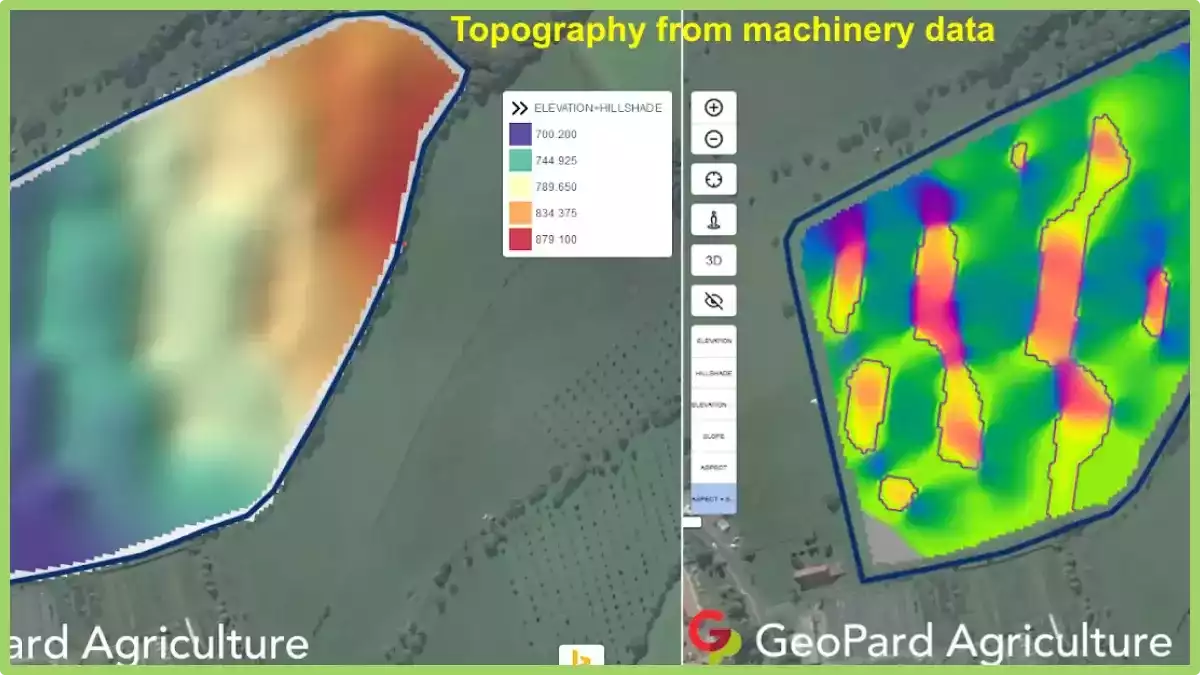

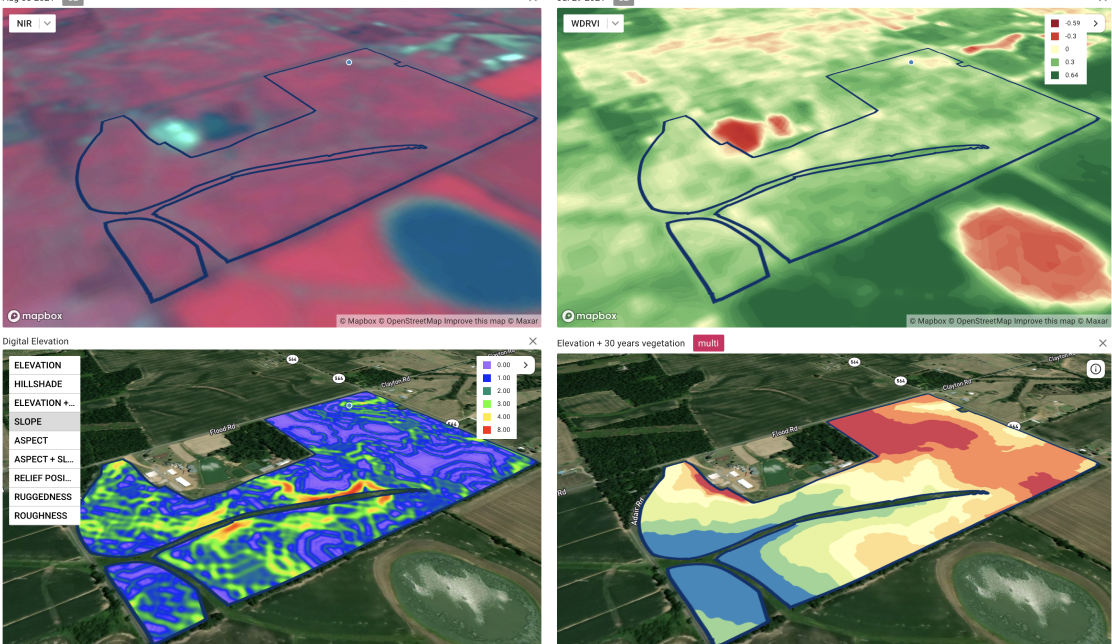

Here is how drone mapping works: a drone carrying sensors such as cameras and laser scanners flies over an area, capturing images or scanning it with lasers at various altitudes and angles. The collected data is then processed into 3D maps that can be viewed on a computer or smartphone screen.

2. Prescription maps for fertilizers, herbicides, and pesticides with drone survey

A one-size-fits-all strategy is out of date: it not only wastes resources but can also harm the health and vitality of crops. Too much water, for example, can kill an otherwise healthy crop by preventing its roots from absorbing oxygen, so watering every plant equally isn’t the best approach to growing flawless crops.

The same is true for fertilizers: using the correct amount is critical for growth, because too much burns the roots, which can destroy otherwise healthy plants.

Drone mapping allows sprays to be applied only where the problem exists, reducing waste of resources and the risk of harming healthy crops that do not require the same treatment. While humans would be unable to recognize the unique requirements of each plant in their crop, drone survey technology can do it in minutes.

3. Crop assessment

At the touch of a button, a scouting mission is launched: the drone leaves its weatherproof charging station, collects data, and uploads it. Its findings, including detected plant stress and the efficacy of any current treatments or amendments, can be used to adjust automated irrigation systems. With on-site scouting drones, constant health checks become possible.

4. Plant population count

With the drone’s AI technology, plants of any variety can be identified and counted. This allows total production and total losses to be determined at the start and end of each season, giving a more precise picture of how successful the growing season was.

5. Automatic classifications with drone imaging

Drone imaging can identify the type of agricultural land being flown over, whether arable, pastoral, or mixed. Drones can also count crops and livestock, as noted above, to verify that records are current and that any losses are recorded.

6. Tracking crops

Crop health isn’t predetermined because environmental factors might influence development. Temperature, humidity, nutritional and trace mineral content, insect and disease presence, water availability, and amounts of sun exposure are all elements to consider.

All of these may be tracked using the drones’ different payloads, and many of these intangible variables can be handled by applying water or sprays directly to the needed regions.

The healthier the crop’s surroundings, the stronger its immune system gets, and thus the healthier it becomes — with a far greater ability to ward off pests and diseases.
